Benchmarking etcd: The Heartbeat of Kubernetes

Welcome to the "heartbeat" of Kubernetes. Today we focus on etcd: how do you benchmark it and test its performance?

etcd is the consistent and highly available key-value store that Kubernetes uses as its backing store for all cluster data. If etcd is slow, your entire cluster feels sluggish: API requests time out and controllers fail to sync.

🧠 About etcd

To optimize something, you must first understand how it works. etcd is a distributed, consistent key-value store that serves as the "brain" of your Kubernetes cluster.

How it Works

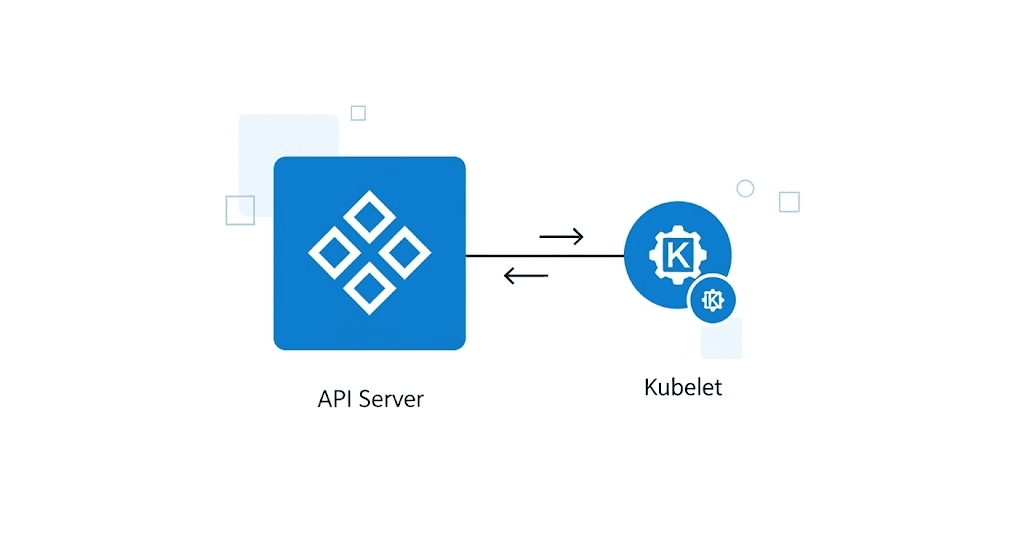

etcd uses the Raft consensus algorithm to keep data consistent across the cluster (typically 3 or 5 nodes). A write goes through the following steps:

Leader Election: One node is elected leader; all writes must go through it.

Replication: The leader replicates the log entry to followers.

Persistence (The Bottleneck): Before confirming a write, etcd must persist the data to disk (Write-Ahead Log or WAL) using an fsync system call.

Consensus: Once a majority (quorum) confirms the write, the request succeeds.

Because every state change requires an fsync to disk, disk latency is the critical path. If your disk is slow, fsync takes longer, the leader blocks, and the entire Kubernetes control plane slows down. This is why "fast SSDs" are non-negotiable for etcd.
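The quorum rule also explains why clusters usually have 3 or 5 members. A minimal sketch of the arithmetic (the function names are illustrative and not part of etcd):

```shell
# quorum N: smallest majority of an N-member cluster
quorum() {
  echo $(( $1 / 2 + 1 ))
}

# fault_tolerance N: members that can fail while a quorum remains
fault_tolerance() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 3 4 5; do
  echo "members=$n quorum=$(quorum "$n") tolerated_failures=$(fault_tolerance "$n")"
done
```

A 4-member cluster needs 3 acknowledgements per write yet tolerates only 1 failure, the same as a 3-member cluster, which is why odd sizes are preferred.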

Why Test?

etcd stores the entire state of a Kubernetes cluster:

Cluster configuration

Pod/Service/Deployment definitions

Secrets and ConfigMaps

Custom Resources

If etcd is slow, the entire cluster is affected:

API server responses become slow

Controllers cannot update state

Pod scheduling is delayed

Watch operations time out

Context: every API server read and write goes through etcd, so it is a critical dependency for the whole control plane, and its performance bounds the performance of the entire cluster.

Prerequisites

Access Methods

Option 1: SSH into control plane node (recommended for production)

ssh control-plane-node

Option 2: kubectl exec into etcd pod

kubectl exec -it etcd-<node-name> -n kube-system -- sh

Install Tools

# Download etcd binaries

ETCD_VER=v3.5.4

curl -L https://github.com/etcd-io/etcd/releases/download/${ETCD_VER}/etcd-${ETCD_VER}-linux-amd64.tar.gz -o /tmp/etcd.tar.gz

tar xzvf /tmp/etcd.tar.gz -C /tmp --strip-components=1

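# Install etcdctl

sudo mv /tmp/etcdctl /usr/local/bin/

# The release tarball does not include the benchmark tool; build it from the etcd source tree (requires Go)

git clone --depth 1 --branch ${ETCD_VER} https://github.com/etcd-io/etcd.git /tmp/etcd-src

(cd /tmp/etcd-src && go install -v ./tools/benchmark)

# Default install location; adjust if GOBIN is set

sudo mv "$(go env GOPATH)/bin/benchmark" /usr/local/bin/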

# Verify

etcdctl version

etcd Endpoints and Certificates

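On a kubeadm cluster, etcd runs as static pods, so the member addresses and certificate paths can be read as follows:

# Endpoints (IPs of the etcd static pods; the client port is 2379)

kubectl get pods -n kube-system -l component=etcd -o jsonpath='{.items[*].status.podIP}'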

# Certificates (usually located here)

/etc/kubernetes/pki/etcd/

├── ca.crt

├── server.crt

└── server.key

Reference: etcd security
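The IP list has to be turned into the comma-separated URL list that etcdctl expects. A minimal sketch, assuming TLS and the default client port 2379 (`build_endpoints` is a hypothetical helper, not part of etcdctl):

```shell
# build_endpoints IP...: join member IPs into an ETCDCTL_ENDPOINTS value.
# Assumes https and the default client port 2379.
build_endpoints() {
  out=""
  for ip in "$@"; do
    # Append a comma only when something has already been collected
    out="${out:+$out,}https://${ip}:2379"
  done
  echo "$out"
}

# Example with the addresses used later in this article
build_endpoints 10.10.10.1 10.10.10.2 10.10.10.3
```

The arguments can come straight from the space-separated IP list printed by the kubectl command above.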

Test Scenarios

1. Smoke Test

Purpose: Verify connectivity and basic operations.

# Set environment variables

export ETCDCTL_API=3

export ETCDCTL_ENDPOINTS="https://10.10.10.1:2379,https://10.10.10.2:2379,https://10.10.10.3:2379"

export ETCDCTL_CACERT=/etc/kubernetes/pki/etcd/ca.crt

export ETCDCTL_CERT=/etc/kubernetes/pki/etcd/server.crt

export ETCDCTL_KEY=/etc/kubernetes/pki/etcd/server.key

# Run smoke test

./scripts/etcdctl/smoke.sh

The script performs:

# Member list

etcdctl member list

# Endpoint health

etcdctl endpoint health

# Endpoint status

etcdctl endpoint status

# Basic put/get/delete

etcdctl put /perf-test/key "value"

etcdctl get /perf-test/key

etcdctl del /perf-test/key

Expected output:

All members healthy

1 leader present

Put/get/delete successful
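The contents of smoke.sh are not shown here, so the following is only a sketch of how such a script might wrap the commands above and report a result per step. The helper names `run_step` and `smoke` are assumptions.

```shell
# run_step NAME CMD...: run one check quietly and report PASS or FAIL.
run_step() {
  step_name=$1
  shift
  if "$@" >/dev/null 2>&1; then
    echo "PASS: $step_name"
  else
    echo "FAIL: $step_name"
    return 1
  fi
}

# smoke: run every check; return non-zero if any of them failed.
smoke() {
  rc=0
  run_step "member list"     etcdctl member list              || rc=1
  run_step "endpoint health" etcdctl endpoint health          || rc=1
  run_step "endpoint status" etcdctl endpoint status          || rc=1
  run_step "put"             etcdctl put /perf-test/key value || rc=1
  run_step "get"             etcdctl get /perf-test/key       || rc=1
  run_step "delete"          etcdctl del /perf-test/key       || rc=1
  return $rc
}
```

Call `smoke` after exporting the ETCDCTL_* variables. Note that `etcdctl get` exits with status 0 even when the key is missing, so this sketch verifies that each request succeeds, not that the stored value is correct.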

2. etcdctl Benchmark

Purpose: Measure client-side performance with realistic load patterns.

./scripts/etcdctl/etcdctl-tool.sh

Test phases:

| Phase | Rate | Clients | Duration |
| --- | --- | --- | --- |
| Medium write | 1,000 req/s | 200 | 60s |
| Heavy write | 8,000 req/s | 500 | 60s |
| Heavy read | 15,000 req/s | 1,000 | 60s |
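The rate and client count of the two write phases match the `m` and `l` workloads built into `etcdctl check perf`. Assuming the script wraps that command (the script itself is not shown, so this is a guess), a phase runner could look like the sketch below. The phase names and both helper functions are hypothetical.

```shell
# perf_load PHASE: map a phase name to an `etcdctl check perf` workload.
perf_load() {
  case $1 in
    medium-write) echo m ;;   # 1,000 req/s, 200 clients, 60s
    heavy-write)  echo l ;;   # 8,000 req/s, 500 clients, 60s
    *) echo "unknown phase: $1" >&2; return 1 ;;
  esac
}

# run_phase PHASE: run one phase; set DRY_RUN=1 to only print the command.
run_phase() {
  load=$(perf_load "$1") || return 1
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "etcdctl check perf --load=$load"
  else
    etcdctl check perf --load="$load"
  fi
}

DRY_RUN=1 run_phase medium-write
```

`etcdctl check perf` generates a put workload, so a read phase would need a different driver, for example the benchmark tool's `range` subcommand.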

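Expected output (rough targets; actual numbers depend on disk, network, and key/value size):

| Metric | 3-node cluster | 5+ node cluster |
| --- | --- | --- |
| Write latency P99 | < 50ms | < 25ms |
| Read latency P99 | < 15ms | < 5ms |
| Write throughput | 5k-10k req/s | 15k-30k req/s |
| Read throughput | 20k-50k req/s | 80k-150k req/s |

Treat the 5+ node write targets with caution: adding members does not by itself speed up writes, because every write must still be acknowledged by a quorum.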

3. Benchmark Tool Test

Purpose: Measure raw etcd performance with the official benchmark tool, which drives the cluster directly over gRPC.

./scripts/benchmark/benchmark-tool.sh

Test types:

| Test | Description | Command (key flags) |
| --- | --- | --- |
| Sequential write | Single client writes | `benchmark --conns=1 --clients=1 put` |
| Concurrent write | Multi-client writes | `benchmark --conns=100 --clients=1000 put` |
| Read (linearizable) | Strongly consistent reads, confirmed through a quorum | `benchmark range <key> --consistency=l` |
| Read (serializable) | Reads served locally by one member; faster but possibly stale | `benchmark range <key> --consistency=s` |
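The table lists only the distinguishing flags; a complete invocation also needs endpoints, TLS options, and workload sizes. The sketch below prints full command lines for each test type. The key sizes, totals, connection counts for reads, and the `/perf-test/key` key are illustrative assumptions, and `bench_cmd` is a hypothetical helper rather than the content of benchmark-tool.sh.

```shell
# Hypothetical settings; adjust to your cluster.
ENDPOINTS="https://10.10.10.1:2379,https://10.10.10.2:2379,https://10.10.10.3:2379"
TLS="--cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/server.crt --key=/etc/kubernetes/pki/etcd/server.key"

# bench_cmd TEST: print the benchmark command line for one test type.
bench_cmd() {
  base="benchmark --endpoints=$ENDPOINTS $TLS"
  case $1 in
    seq-write)  echo "$base --conns=1 --clients=1 put --key-size=8 --sequential-keys --total=10000 --val-size=256" ;;
    conc-write) echo "$base --conns=100 --clients=1000 put --key-size=8 --sequential-keys --total=100000 --val-size=256" ;;
    read-l)     echo "$base --conns=100 --clients=1000 range /perf-test/key --consistency=l --total=100000" ;;
    read-s)     echo "$base --conns=100 --clients=1000 range /perf-test/key --consistency=s --total=100000" ;;
    *) echo "unknown test: $1" >&2; return 1 ;;
  esac
}

# Print the command for review before running it
bench_cmd seq-write
```

Printing the command first makes it easy to review before running it, for example with `bench_cmd seq-write | sh`.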

Cleanup

After testing, delete test data:

./scripts/benchmark/clean-and-recovery.sh

Metrics to Monitor

In Grafana (import grafana-dashboard/k8s-system-etcd.json):

| Metric | PromQL | Meaning |
| --- | --- | --- |
| WAL fsync duration (P99) | `histogram_quantile(0.99, rate(etcd_disk_wal_fsync_duration_seconds_bucket[5m]))` | Disk write performance |
| Backend commit (P99) | `histogram_quantile(0.99, rate(etcd_disk_backend_commit_duration_seconds_bucket[5m]))` | Database commit time |
| Leader elections | `increase(etcd_server_leader_changes_seen_total[1h])` | Cluster stability |
| DB size | `etcd_mvcc_db_total_size_in_bytes` | Database size |

Thresholds:

WAL fsync P99 > 10ms → Disk too slow

Leader changes > 0/hour → Network or disk issues

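DB size > 6GB → Need compact/defrag (this assumes the quota was raised toward the suggested 8GB maximum; with the default 2GB --quota-backend-bytes, act well before 2GB)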

Reference: etcd metrics
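The thresholds above can also be checked from a script once the metric values have been fetched. A minimal sketch, assuming the three values are passed in as plain numbers (`exceeds` and `check_etcd` are hypothetical helpers):

```shell
# exceeds VALUE LIMIT: succeed when VALUE > LIMIT (floating point, via awk).
exceeds() {
  awk -v v="$1" -v l="$2" 'BEGIN { exit !(v + 0 > l + 0) }'
}

# check_etcd FSYNC_P99_SECONDS LEADER_CHANGES_1H DB_SIZE_BYTES
# Prints one line per threshold that is exceeded.
check_etcd() {
  exceeds "$1" 0.010      && echo "WAL fsync P99 ${1}s > 10ms: disk too slow"
  exceeds "$2" 0          && echo "leader changes in last hour: $2"
  exceeds "$3" 6000000000 && echo "DB size $3 bytes > 6GB: compact/defrag"
  return 0
}

check_etcd 0.004 0 1500000000   # healthy: prints nothing
check_etcd 0.025 2 1500000000   # prints the fsync and leader-change warnings
```

awk is used for the comparison because POSIX shell arithmetic handles integers only.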

Troubleshooting

Connection refused:

# Check endpoints

etcdctl endpoint health

# Verify firewall, certificates

High latency:

# Check disk I/O

iostat -x 1

# Check etcd logs

kubectl logs etcd-<node> -n kube-system | grep -iE 'slow|took too long'
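To measure fsync latency directly, a common approach is an fio job that mimics the WAL pattern: small sequential writes, each followed by fdatasync. The sketch below assumes fio is installed; the job name, the 22m size, the 2300-byte block size, and the `fsync_probe` helper are illustrative choices, not values taken from this article's scripts.

```shell
# fsync_probe DIR: write small blocks with an fdatasync after each one,
# roughly the pattern of etcd's WAL. DIR must already exist on the disk
# under test. Set DRY_RUN=1 to print the command instead of running it.
fsync_probe() {
  set -- fio --name=etcd-fsync --directory="$1" --rw=write \
         --ioengine=sync --fdatasync=1 --size=22m --bs=2300
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical scratch directory on the same disk as the etcd data directory
DRY_RUN=1 fsync_probe /mnt/etcd-disk/fio-test
```

In the fio report, look at the fsync/fdatasync latency percentiles; the 99th percentile should stay below 10ms to be consistent with the WAL fsync threshold above.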

Database too large:

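# Compact up to the current revision

rev=$(etcdctl endpoint status --write-out=json | grep -Eo '"revision":[0-9]+' | grep -Eo '[0-9]+' | sort -n | head -1)

etcdctl compact "$rev"

# Defragment (blocks each member while it runs)

etcdctl defrag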

Configuration

Update the scripts with your cluster information:

# scripts/etcdctl/etcdctl-tool.sh

ETCD_HOSTS="10.10.10.1:2379,10.10.10.2:2379,10.10.10.3:2379"

OPTIONS="--cacert=/path/to/ca.crt --cert=/path/to/client.crt --key=/path/to/client.key"
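A wrong certificate path is a frequent cause of connection errors, so it can help to validate OPTIONS before starting a long benchmark. A minimal sketch (`preflight` is a hypothetical helper, not part of the scripts):

```shell
# preflight OPTIONS_STRING: check that every certificate path is readable.
# Returns non-zero and names each missing file on stderr.
preflight() {
  rc=0
  for opt in $1; do
    case $opt in
      --cacert=*|--cert=*|--key=*)
        file=${opt#*=}          # strip the flag name, keep the path
        if [ ! -r "$file" ]; then
          echo "missing or unreadable: $file" >&2
          rc=1
        fi
        ;;
    esac
  done
  return $rc
}
```

For example, `preflight "$OPTIONS" && etcdctl --endpoints="$ETCD_HOSTS" $OPTIONS endpoint health` runs the health check only when all three files are readable.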