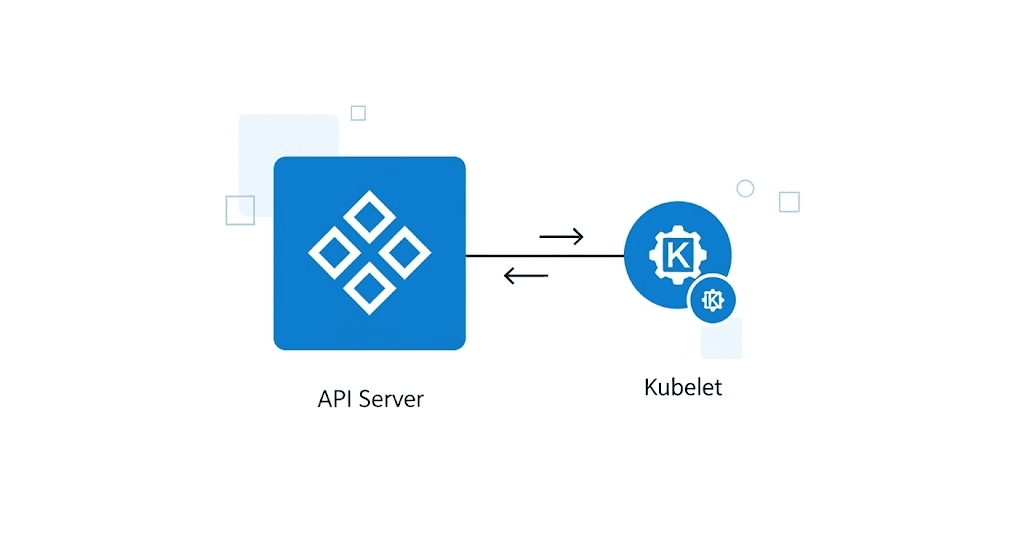

Kubernetes API Server & Kubelet Performance Testing

Benchmark the control plane and worker nodes of a Kubernetes cluster using kube-burner

In a Kubernetes cluster, the API Server is the brain and the Kubelet is the muscle that actually runs your workloads. Both need to be stress-tested before you can trust the cluster with production deployments.

To test both, we use kube-burner, a tool that stresses the control plane by creating, updating, and deleting thousands of objects while measuring how quickly the Kubelets pick up and run the resulting workloads.

Why Test?

The API Server is the central point for all requests in a Kubernetes cluster. If the API Server cannot handle the load, the entire cluster is affected:

Pod scheduling becomes slow or fails

Service discovery stops working

kubectl commands time out

Controllers cannot reconcile

Context: When a cluster has many workloads, the API Server must handle thousands of requests/second from:

Controllers (deployment, replicaset, daemonset...)

Kubelet (node status, pod status)

Users (kubectl, CI/CD pipelines)

Applications (in-cluster clients)

Install kube-burner

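# Download and install (if the URL returns 404, copy the exact asset link from the releases page; asset names can include the version)

curl -fL https://github.com/cloud-bulldozer/kube-burner/releases/latest/download/kube-burner-linux-amd64.tar.gz | tar xz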

sudo mv kube-burner /usr/local/bin/

# Verify

kube-burner version

Reference: kube-burner installation
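Before running any scenario it can help to confirm that the required CLIs are on the PATH. The helper below is a minimal sketch; the function name `missing_tools` is hypothetical, and the list of tools to check is an assumption you should adapt.

```shell
# Print the names of any tools that are not found on PATH.
missing_tools() {
  missing=""
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || missing="$missing $tool"
  done
  # Trim the leading space before printing.
  echo "${missing# }"
}

# Usage: an empty result means everything was found.
# missing_tools kubectl kube-burner
```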

Test Scenarios

1. Smoke Test

Purpose: Basic validation, baseline performance.

Input:

10 namespaces

50 objects (secrets, deployments)

QPS: 5, Burst: 5

Run test:

kube-burner init -c api-server/smoke.yaml

Expected output:

| Metric | Target |
|---|---|
| Success rate | > 99% |
| P99 latency | < 500ms |
| Duration | ~3-5 min |
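The contents of api-server/smoke.yaml are not shown in this article. As a rough sketch only, a config matching the inputs above (10 namespaces, 50 objects, QPS 5, burst 5) might look like the following. The template paths and the 3/2 split between secrets and deployments are hypothetical, and the field names reflect kube-burner's job configuration as commonly documented, so verify them against the version you installed.

```shell
# Write a hypothetical smoke-test config to a scratch file for inspection.
cfg="$(mktemp)"
cat > "$cfg" <<'EOF'
jobs:
  - name: smoke
    jobType: create
    jobIterations: 10            # one namespace per iteration -> 10 namespaces
    namespacedIterations: true
    namespace: smoke
    qps: 5
    burst: 5
    objects:
      - objectTemplate: templates/secret.yml       # hypothetical path
        replicas: 3
      - objectTemplate: templates/deployment.yml   # hypothetical path
        replicas: 2
EOF
echo "$cfg"
```

With 5 objects per iteration and 10 iterations, this sketch yields the 50 objects listed in the inputs.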

2. Load Test (API Intensive)

Purpose: Evaluate API Server capacity under production load.

Input:

30 namespaces

1,800 objects (deployments, configmaps, secrets, services)

QPS: 25, Burst: 30

3 phases: Create → Patch → Delete

Run test:

kube-burner init -c api-server/api-intensive.yml

Test phases:

| Phase | Action | Duration |
|---|---|---|
| 1 | Object creation (1,800 objects) | ~15 min |
| 2 | Object patching (JSON patch, strategic merge) | ~5 min |
| 3 | Cleanup (cascade delete) | ~10 min |
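To sanity-check these durations, it is useful to know the floor that the client-side rate limit imposes. The sketch below (the function name `min_seconds` is hypothetical) computes the minimum time needed to issue a number of requests at a given QPS, ignoring burst, retries, and any waiting for objects to become ready.

```shell
# Minimum seconds needed to issue N requests at a given client-side QPS
# (ceiling division). Ignores burst, retries, and readiness waits.
min_seconds() {
  objects=$1
  qps=$2
  echo $(( (objects + qps - 1) / qps ))
}

min_seconds 1800 25   # prints 72
```

For 1,800 objects at QPS 25 the floor is 72 seconds, so most of the creation phase is presumably spent on something other than request throttling, such as waiting for workloads to become ready. That explanation is an assumption, not something measured here.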

Expected output:

| Cluster Size | API Server QPS | P99 Latency |
|---|---|---|
| 3-5 nodes | 500-1,500 | < 1s |
| 5-20 nodes | 1,500-5,000 | < 500ms |
| 20+ nodes | 5,000-15,000 | < 200ms |

3. Kubelet Density Test

Purpose: Evaluate cluster scheduling and pod lifecycle capabilities.

Run test:

# Web application workload

kube-burner init -c kubelet-density-cni/kubelet-density-cni.yml

# Database workload

kube-burner init -c kubelet-density-database/kubelet-density-database.yml

Expected output:

| Metric | Target |
|---|---|
| Pod startup time | < 30s |
| Scheduling latency | < 5s |
| Pod churn rate | Stable |
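To spot-check the pod startup target on a single pod, one option is to compare the pod's creation timestamp with the time its Ready condition last changed. This is a rough sketch, not how kube-burner itself measures latency: the function names are hypothetical, it assumes GNU `date`, and the Ready timestamp reflects the most recent transition, so it can mislead for pods that have restarted.

```shell
# Seconds between two RFC 3339 timestamps (assumes GNU date).
seconds_between() {
  start=$(date -u -d "$1" +%s)
  end=$(date -u -d "$2" +%s)
  echo $(( end - start ))
}

# Startup time of one pod, from creation to Ready. Requires cluster access.
pod_startup_seconds() {
  ns=$1
  pod=$2
  created=$(kubectl get pod "$pod" -n "$ns" \
    -o jsonpath='{.metadata.creationTimestamp}')
  ready=$(kubectl get pod "$pod" -n "$ns" \
    -o jsonpath='{.status.conditions[?(@.type=="Ready")].lastTransitionTime}')
  seconds_between "$created" "$ready"
}

# Usage: pod_startup_seconds <namespace> <pod-name>
```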

Metrics to Monitor

In Grafana (import grafana-dashboard/k8s-system-api-server.json):

| Metric | PromQL | Meaning |
|---|---|---|
| Request latency | histogram_quantile(0.99, sum by (le) (rate(apiserver_request_duration_seconds_bucket[5m]))) | P99 response time |
| Request rate | sum(rate(apiserver_request_total[5m])) | QPS |
| Error rate | sum(rate(apiserver_request_total{code=~"5.."}[5m])) | Server errors (5xx) per second |
| etcd latency | histogram_quantile(0.99, sum by (le) (rate(etcd_request_duration_seconds_bucket[5m]))) | Backend latency |
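The same metrics can be queried outside Grafana through the Prometheus HTTP API. The sketch below builds an error-ratio query (5xx requests divided by all requests), which maps more directly onto a success-rate target than the raw error rate. The function names and the `PROM_URL` variable are assumptions; point `PROM_URL` at your own Prometheus endpoint, for example a port-forwarded `http://localhost:9090`.

```shell
# PromQL for the share of API requests that returned a 5xx code.
error_ratio_query() {
  window=${1:-5m}
  printf 'sum(rate(apiserver_request_total{code=~"5.."}[%s])) / sum(rate(apiserver_request_total[%s]))\n' \
    "$window" "$window"
}

# Run an instant query against the Prometheus HTTP API.
# PROM_URL is an assumption and must be set by the caller.
prom_query() {
  curl -sG "${PROM_URL}/api/v1/query" --data-urlencode "query=$1"
}

# Usage: prom_query "$(error_ratio_query 5m)"
```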

Parameter Tuning

Adjust these values in the config file based on cluster size:

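# Small cluster (3-5 nodes)
jobs:
  - name: api-test
    qps: 10
    burst: 20
    jobIterations: 10
    objects:
      # replicas is set per object entry, not on the job itself
      - objectTemplate: <template-file>
        replicas: 5

# Large cluster (20+ nodes)
jobs:
  - name: api-test
    qps: 100
    burst: 200
    jobIterations: 50
    objects:
      - objectTemplate: <template-file>
        replicas: 50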
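Choosing between the two presets can be scripted from the node count. This is a minimal sketch with a hypothetical function name; it deliberately refuses to guess for cluster sizes that fall between the two presets, since no values are given for them here.

```shell
# Map a node count to the qps/burst presets listed above.
# Sizes outside the two documented ranges return an error.
preset_for_nodes() {
  nodes=$1
  if [ "$nodes" -ge 20 ]; then
    echo "qps=100 burst=200"
  elif [ "$nodes" -ge 3 ] && [ "$nodes" -le 5 ]; then
    echo "qps=10 burst=20"
  else
    echo "no preset for $nodes nodes" >&2
    return 1
  fi
}

# Usage (requires cluster access):
# preset_for_nodes "$(kubectl get nodes --no-headers | wc -l)"
```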

Troubleshooting

Pods stuck Pending:

→ Reduce replicas or increase cluster resources.
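To see why pods are Pending before changing anything, a quick check is to list them alongside the scheduler's failure events. The wrapper name `pending_report` is hypothetical; the kubectl field selectors are standard ones.

```shell
# List Pending pods and the scheduler's stated reasons.
# Requires cluster access.
pending_report() {
  kubectl get pods --all-namespaces --field-selector=status.phase=Pending
  kubectl get events --all-namespaces --field-selector=reason=FailedScheduling
}

# Usage: pending_report
```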

High API latency:

kubectl top pods -n kube-system

kubectl logs kube-apiserver-<node> -n kube-system | grep -i error

→ Check etcd performance, reduce qps/burst.

Cleanup failed:

# Get list of namespaces to delete

kubectl get namespace -l kube-burner-job=<job-name>

# Delete each namespace

kubectl delete namespace <namespace-name>

# Or use xargs to delete in bulk

kubectl get namespace -l kube-burner-job=<job-name> -o name | xargs kubectl delete
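As an alternative sketch, `kubectl delete` accepts the label selector directly, and `kubectl wait --for=delete` can block until the namespaces are actually gone. The function name and the 10-minute timeout are assumptions, and some kubectl versions return an error from `wait` when nothing matches the selector, so treat a non-zero exit with care.

```shell
# Delete every namespace labelled for a job, then wait for them to disappear.
# Requires cluster access.
cleanup_job() {
  job=$1
  kubectl delete namespace -l "kube-burner-job=${job}" --wait=false
  kubectl wait namespace -l "kube-burner-job=${job}" --for=delete --timeout=10m
}

# Usage: cleanup_job <job-name>
```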